Simple Questions for Complex Problems

How Organizational Blindness Turns Pressure into Catastrophe

It’s easy to be a Monday morning quarterback and criticize BP, Halliburton, Transocean, Schlumberger, and the Minerals Management Service (MMS) for the tragedy at Deepwater Horizon. They were skilled professionals working in a dangerous, high-risk operation with tremendous stakes. Even so, the organizations involved made a series of preventable, compounding bad decisions that culminated in the explosion, the deaths of 11 people, and the catastrophic contamination of the Gulf of Mexico.

My purpose is neither to criticize nor to second-guess decisions made at Macondo. Rather, it is to analyze the organizational dynamics that led to the tragedy and propose a framework that similar organizations can use to prevent the next one. The recent rollback of environmental findings makes the internal governance of energy producers particularly urgent.

The managers working on the Macondo well were under tremendous pressure to finish their work. Behind schedule and burning cash, they ignored warnings and skipped steps that, in isolation, weren’t disastrous. Taken together, they were catastrophic.

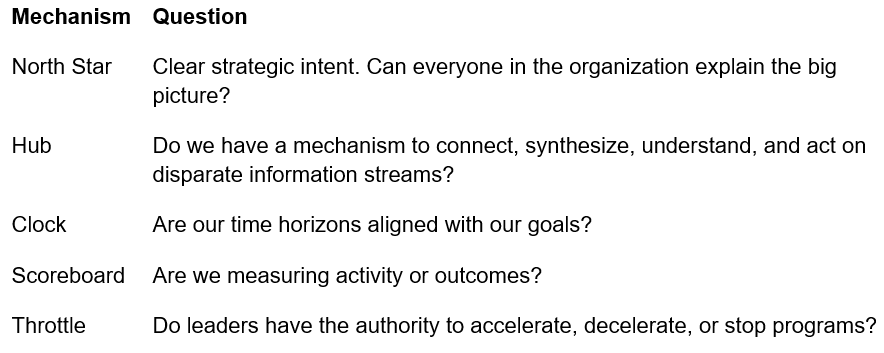

We’ve all been there. Projects go off the rails and the scrutiny intensifies. The pressure magnifies the organizational dynamics that create peripheral blindness and lead to questionable decisions. Last week I described The Loop, a model of how organizations fail to act on information they already have. It emerges through three failure modes: synthesis failure, misaligned incentives, and institutional capture. This week I’m applying a Loop-breaking framework that asks five simple questions.

At Macondo, the answer to all five was no.

The Macondo well was a billion-dollar asset. BP, Transocean, Halliburton, and Schlumberger treated it like a construction project running late. That distinction is the entire story.

Start with the North Star. A team aligned around the safe extraction of the maximum value of crude oil doesn't skip a $128,000 cement bond test. They don't ignore anomalous pressure readings from the negative pressure test. The mission had been quietly replaced by a different one: get the well online this week. Nobody said so out loud. Nobody had to.

Which raises the Hub question. Was there a single person or business unit responsible for synthesizing what BP knew, what Transocean knew, and what Halliburton knew into a unified picture of risk? The presidential commission that investigated the spill answers that directly. Information flowed in vertical stovepipes rather than horizontally across organizations. People made decisions without fully comprehending their connection to other critical problems unfolding across the operation. The warning signs were all there. No architecture of shared consciousness existed across the disparate organizations to connect them before the obvious tradeoffs between speed and safety became fatal.

The team at Macondo was the Sundance Kid, perched at the top of a cliff, worried about whether he could swim. Butch had to explain it to him. It's not the water that's going to kill you. It's the fall. At Macondo, nobody was Butch.

The Clock failure made it worse. They were using a stopwatch to track daily expenses on a well expected to produce for decades. Someone inside that organization should have been able to say: we are burning $1 million a day on a well that will generate $1 billion over its lifetime. The overrun is noise. The asset is the signal. BP’s annual revenues exceeded $260 billion. Had the Macondo team run a full year over schedule, the overrun would have come to roughly $365 million, less than 0.15 percent of one year’s revenue. It would still have been immaterial. Nobody reframed it that way because nobody was looking at the right clock.

Time horizons shape metrics, and metrics shape behavior. The Commission found that the relentless demand to bring production online caused the team to define success by the daily schedule rather than the long-term value of the asset. They were keeping score on the wrong game entirely.

And when it all came apart, nobody hit the kill switch. Theoretically, every worker on that rig could stop operations for safety. In practice, the Commission found no evidence of a top-down safety culture that made that real. BP’s well site leaders and Transocean managers had the positional authority to slow down, but no formal risk analysis system gave anyone the full picture required to use it.

The MMS, the regulator with actual legal authority to halt operations, had been captured. The royalty payments flowing from the oil industry to the MMS created a regulator that was financially dependent on the industry it was supposed to police. The MMS measured itself by royalty generation rather than by the rigor of its enforcement regime. The green light was always on.

Five mechanisms. All five failed. Eleven people died.

There is no way to say definitively that this framework would have prevented the accident at Macondo. Deepwater drilling is one of the most complex industrial endeavors on earth and is inherently dangerous. I’m not pretending otherwise. But it is clear that the framework would have surfaced the risks to a centralized authority and reframed the problem before it was too late.

Deepwater Horizon is not an isolated case. The pattern repeats. NASA launched the Challenger despite warnings from Morton Thiokol about O-ring performance in extreme cold. Boeing rushed the 737 MAX to market to compete with Airbus, suppressing internal safety concerns until two crashes killed 346 people. The American war in Afghanistan persisted across 20 years and four presidential administrations despite misgivings from senior leaders in the White House and Pentagon that emerged within months of the invasion.

Organizations designed for the speed of information flows in the 1950s are simply not equipped for a world where information travels exponentially faster across globally extended enterprises. In the weeks ahead we’ll look at the role speed and complexity play in failure, and how organizations can ensure that information flows horizontally to enable a complete and coherent picture of risk.